Measure what matters

Define custom evaluation metrics aligned to your agent's actual performance goals. Stop relying on generic benchmarks and create ones specific to your use case.

Stop shipping agents blind.

LangSmith benchmarks let you systematically evaluate agent quality, compare versions, and identify what actually drives performance improvements. Benchmark against datasets and production traffic.

Try LangSmith free. No credit card required.

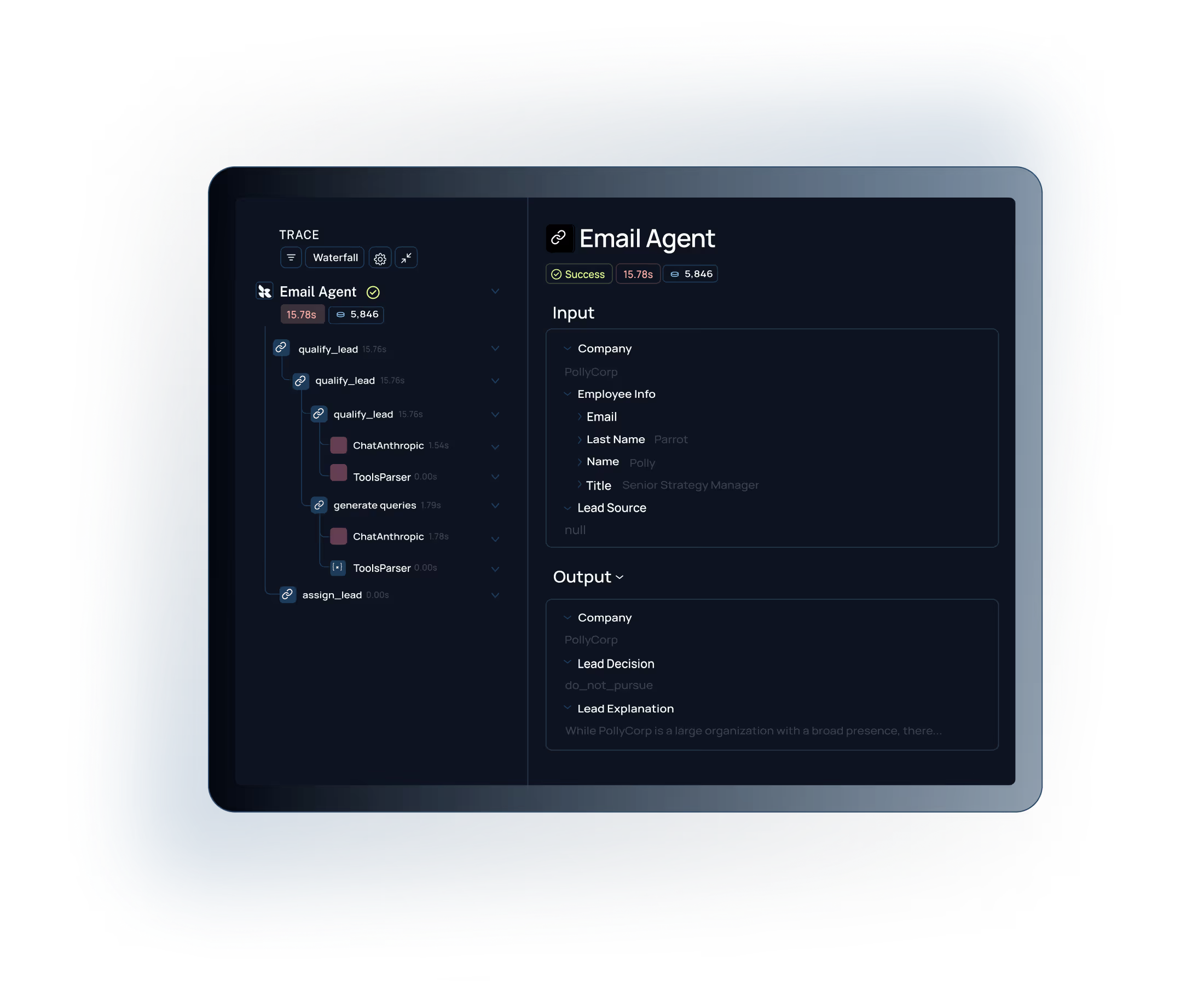

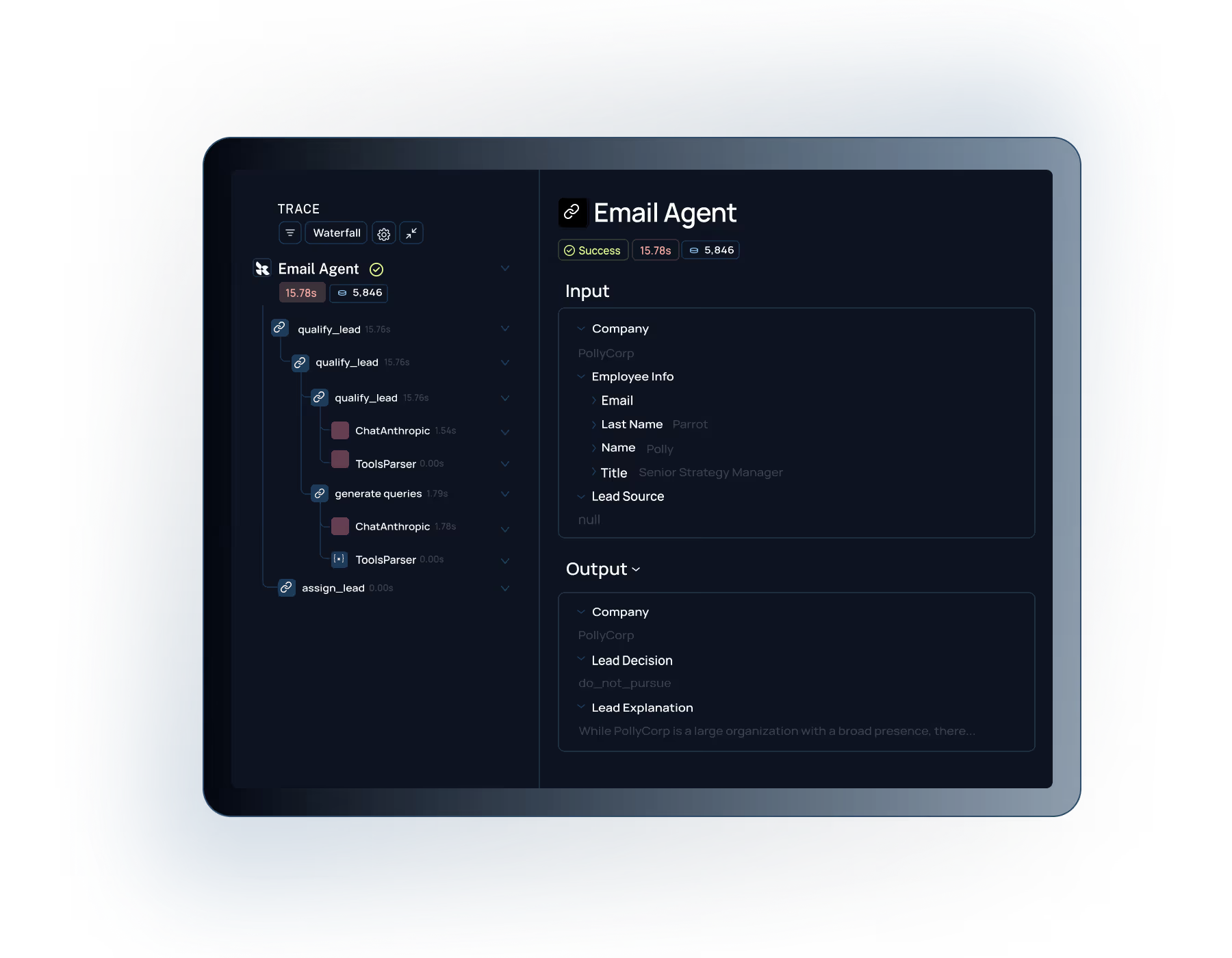

Instrument your agents to capture traces. LangSmith records every decision, tool call, and output for evaluation.

Create evaluation datasets and metrics aligned to your agent's goals. Benchmark offline against known examples.

Run evals on production traffic to catch quality drops. Use results to iterate and ship better agent versions.
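To make the offline step concrete, here is a minimal, library-agnostic sketch of scoring an agent against known examples with a custom metric. It is plain Python and does not use the LangSmith SDK; `toy_agent`, `DATASET`, `exact_match`, and `run_offline_eval` are hypothetical names made up for illustration.

```python
from statistics import mean

# Hypothetical dataset: each example pairs an input with the expected answer.
DATASET = [
    {"input": "2 + 2", "expected": "4"},
    {"input": "capital of France", "expected": "Paris"},
    {"input": "3 * 3", "expected": "9"},
]


def toy_agent(question: str) -> str:
    """Stand-in for a real agent; answers from a tiny lookup table."""
    answers = {"2 + 2": "4", "capital of France": "paris"}
    return answers.get(question, "unknown")


def exact_match(output: str, expected: str) -> float:
    """Custom metric: 1.0 if the answers match, ignoring case and whitespace."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_offline_eval(agent, dataset, metric) -> float:
    """Score every example with the metric and return the mean score."""
    return mean(metric(agent(ex["input"]), ex["expected"]) for ex in dataset)


score = run_offline_eval(toy_agent, DATASET, exact_match)
print(f"exact_match: {score:.2f}")
```

In practice the metric would encode whatever your agent is actually supposed to achieve (a correct tool call, a grounded answer, a resolved ticket) rather than a simple string match.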


Teams trust LangSmith to benchmark and improve their agent performance

Evaluate agent quality systematically with offline and online evaluations

Agent benchmarking starts with visibility. LangSmith tracing captures every step, tool call, and decision your agent makes. This gives you the raw signal you need to build reliable evaluation datasets and identify what's driving performance.

Connect with our team to see how

Built for Enterprise

LangSmith meets the demanding security, performance, and collaboration requirements of large organizations building AI applications at scale.

Role-based access control with org-level permissions and project isolation to meet your security and compliance requirements.


Self-hosting options to maintain full control over your AI data and meet strict compliance requirements.


Run controlled evaluations comparing agent versions side-by-side. See exactly what impact prompt changes, tool additions, and model switches have on quality.

Monitor live agent quality with online evaluations on production traffic. Catch regressions immediately instead of hearing about them from users.
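As a rough illustration of a side-by-side comparison, the sketch below summarizes per-example scores from two agent versions run on the same dataset. It is plain Python rather than the LangSmith SDK; `compare_versions` and the score lists are hypothetical and the numbers are made up for the example.

```python
def compare_versions(baseline, candidate):
    """Summarize per-example wins, losses, and ties between two versions.

    Both lists must hold scores for the same examples in the same order.
    """
    if not baseline or len(baseline) != len(candidate):
        raise ValueError("score lists must be non-empty and the same length")
    wins = sum(c > b for b, c in zip(baseline, candidate))
    losses = sum(c < b for b, c in zip(baseline, candidate))
    ties = len(baseline) - wins - losses
    mean_delta = sum(candidate) / len(candidate) - sum(baseline) / len(baseline)
    return {"wins": wins, "losses": losses, "ties": ties, "mean_delta": mean_delta}


# Illustrative scores only: 1.0 means the example passed, 0.0 means it failed.
baseline_scores = [1.0, 0.0, 1.0, 0.0, 1.0]
candidate_scores = [1.0, 1.0, 1.0, 1.0, 0.0]

report = compare_versions(baseline_scores, candidate_scores)
print(report)
```

Looking at wins and losses per example, not just the change in the mean, shows when a new version fixes some cases while breaking others.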

"What we really needed was a more structured way to test new approaches, something better than just shipping and seeing what happened. LangSmith gave us a more scientific, structured way to understand what was actually working, whether that meant running pairwise evaluations or digging into why accuracy jumped from 70% to 80%. Our engineers especially love the intuitive debugging experience, it's saved us a lot of time."

"LangSmith's evaluation capabilities let us systematically improve our agent performance. We went from 70% to 80% accuracy by using LangSmith to identify which agent design choices actually mattered. The ability to benchmark against our production data is critical—we can see exactly what changes drive real improvements."

Learn how to systematically evaluate agent quality with LangSmith's benchmarking and evaluation tools.