Systematic measurement

Move beyond ad-hoc testing. Run reproducible evals on consistent datasets and measure quality improvements with statistical confidence.

Run offline and online evals to measure agent performance.

Catch quality issues before they reach users and iterate with confidence using LangSmith's comprehensive evaluation framework.

Try LangSmith free. No credit card required.

Build eval datasets from production traces or manual examples. LangSmith makes it easy to curate representative test cases.

Execute evaluations with custom metrics, semantic similarity scorers, and LLM-as-judge evaluation. Get instant feedback on quality.

Compare eval results across experiments. Ship improvements with confidence knowing they're backed by rigorous evaluation data.

.svg)

.svg)

.svg)

.svg)

.svg)

Teams rely on LangSmith evaluations to measure and improve AI quality at scale

Run offline and online evaluations to measure and continuously improve AI quality

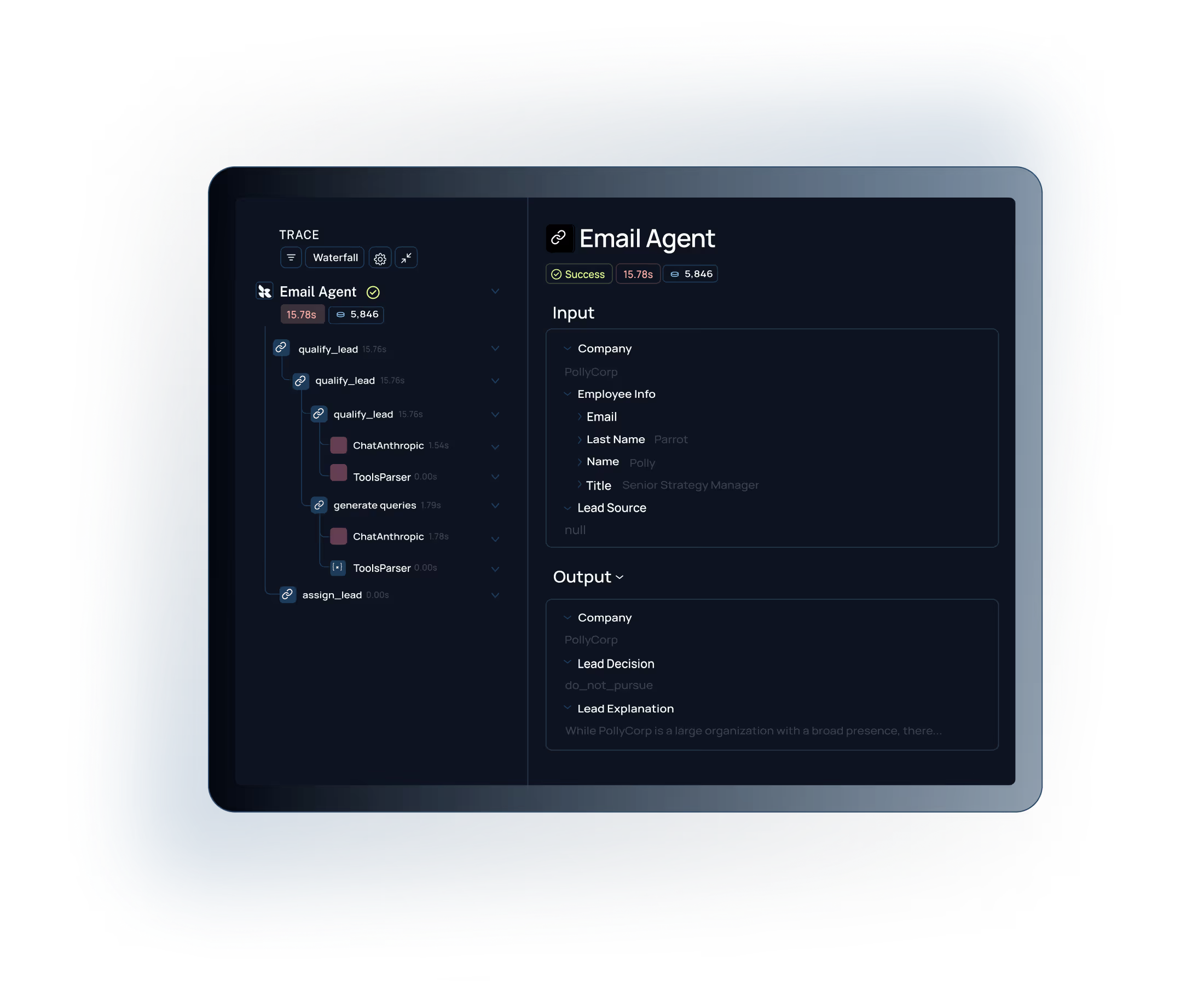

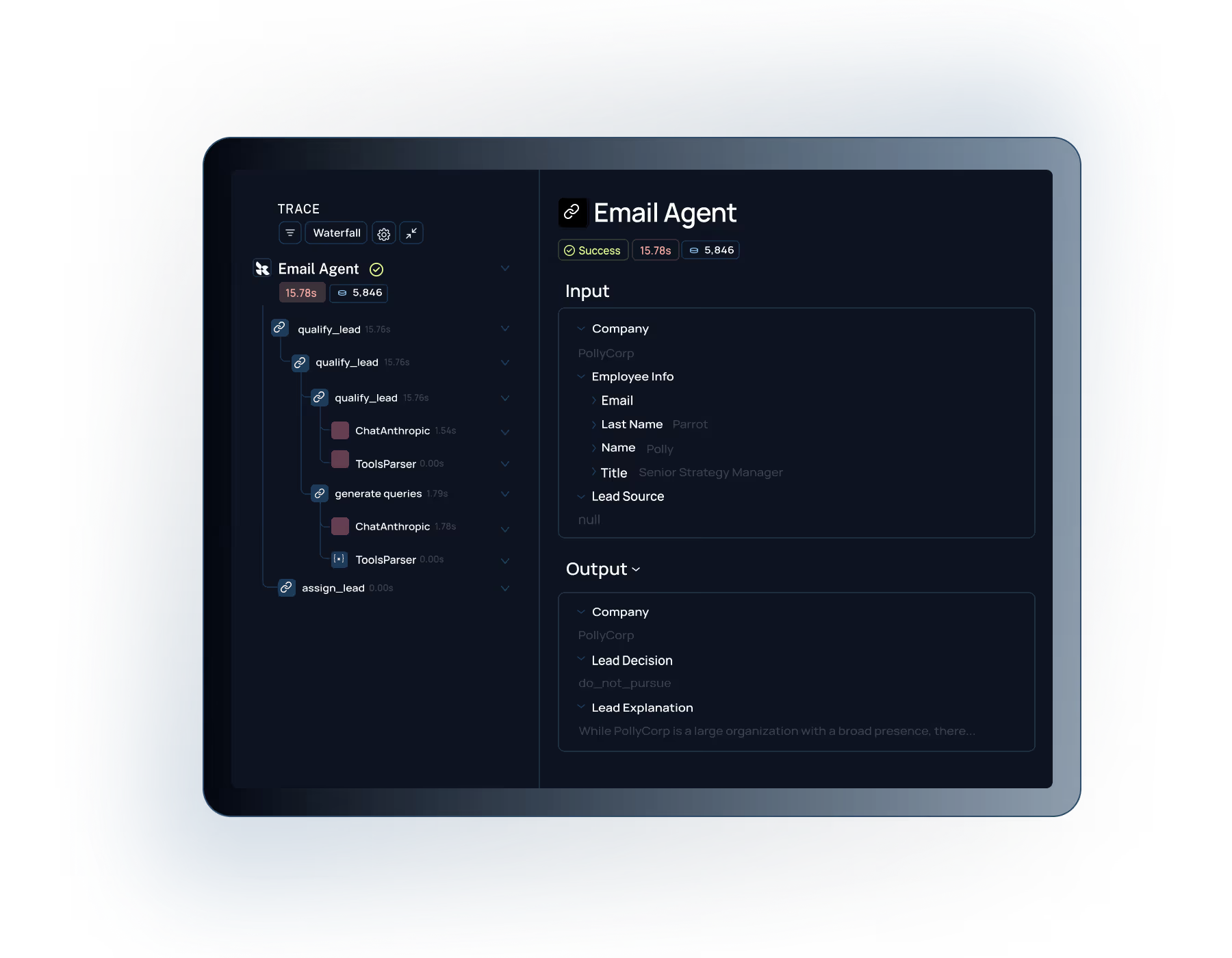

Run evals on production traces to measure real-world performance. LangSmith's tracing gives you the visibility to understand agent decisions and catch quality issues in context.

Connect with our team to see how

Built for Enterprise

LangSmith meets the demanding security, performance, and collaboration requirements of large organizations building AI applications at scale.

Role-based access control with org-level permissions and project isolation to meet your security and compliance requirements.

.svg)

Self-hosting options to maintain full control over your AI data and meet strict compliance requirements.

Move beyond ad-hoc testing. Run reproducible evals on consistent datasets and measure quality improvements with statistical confidence.

Close the feedback loop from production signals to improvements. Evaluate changes before shipping and learn what actually works.

Your eval datasets automatically grow from production traces. Evaluations always stay relevant to real user behavior.

"Working with LangSmith on the Elastic AI Assistant had a significant positive impact on the overall pace and quality of our development and shipping experience. We couldn't have delivered the product experience our customers now have without LangSmith—and we couldn't have done it at the same pace without it."

"What we really needed was a more structured way to test new approaches, something better than just shipping and seeing what happened. LangSmith gave us a more scientific, structured way to understand what was actually working, whether that meant running pairwise evaluations or digging into why accuracy jumped from 70% to 80%. Our engineers especially love the intuitive debugging experience, it's saved us a lot of time."

See how LangSmith's evaluation framework helps teams systematically measure and improve AI quality.