Data-driven decisions

Measure what actually matters for your models. Replace gut-feel improvements with objective scoring that shows real progress on business metrics.

Stop guessing about model quality.

Systematically test and score your AI models with LangSmith's automated evaluation framework. Benchmark performance before shipping and track improvements in production.

Try LangSmith free. No credit card required.

Create custom scorers that measure what matters for your use case. Use LLM-based graders, deterministic rules, or your own logic.

Score test datasets before shipping. Then run continuous evals on production traces to catch quality drops automatically.

Compare model versions objectively. Iterate on prompts and parameters knowing exactly how changes impact your metrics.

.svg)

.svg)

.svg)

.svg)

.svg)

Teams trust LangSmith to systematically evaluate and improve their AI models at scale

Systematically test, score, and improve your AI models with data-driven evaluation

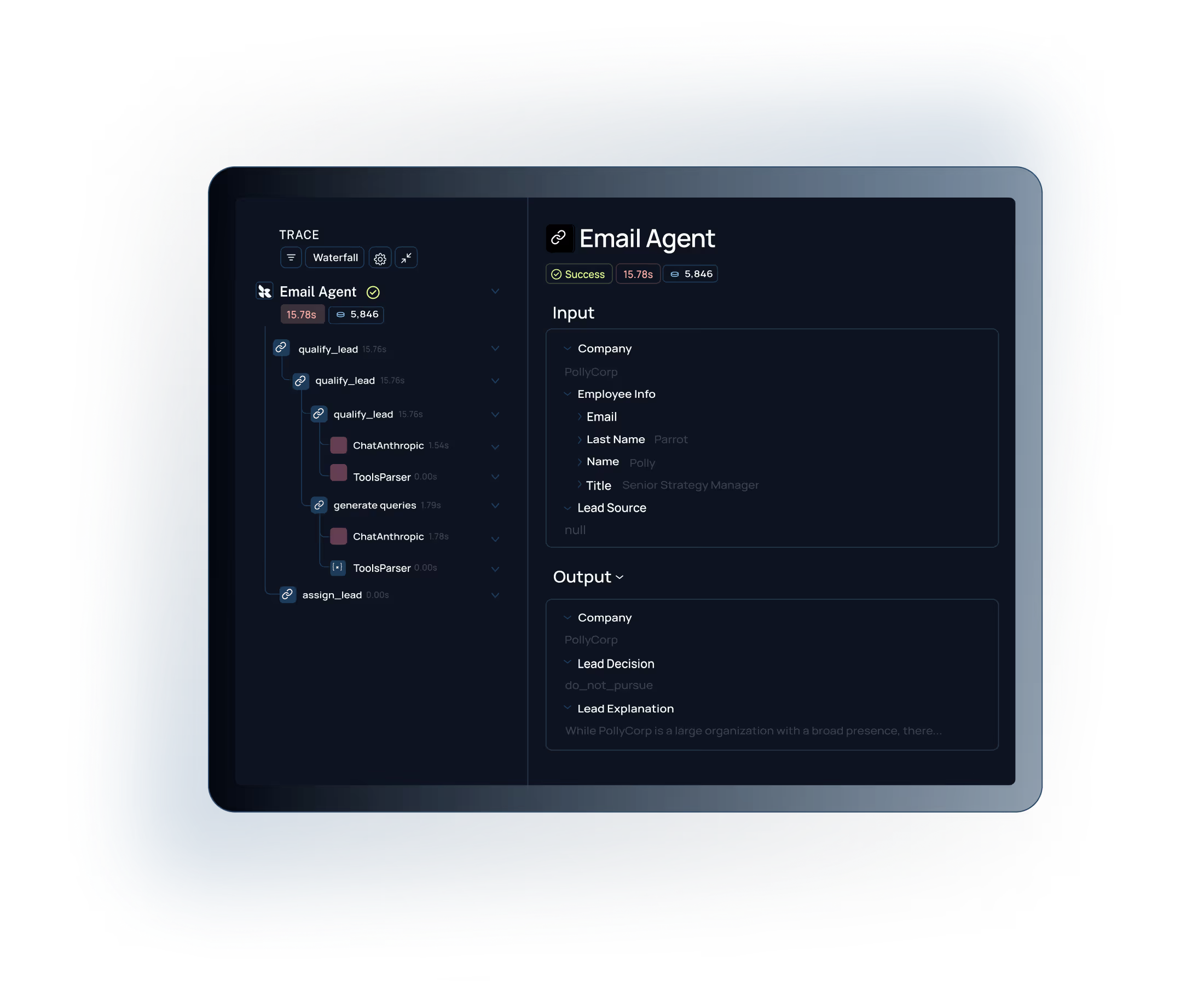

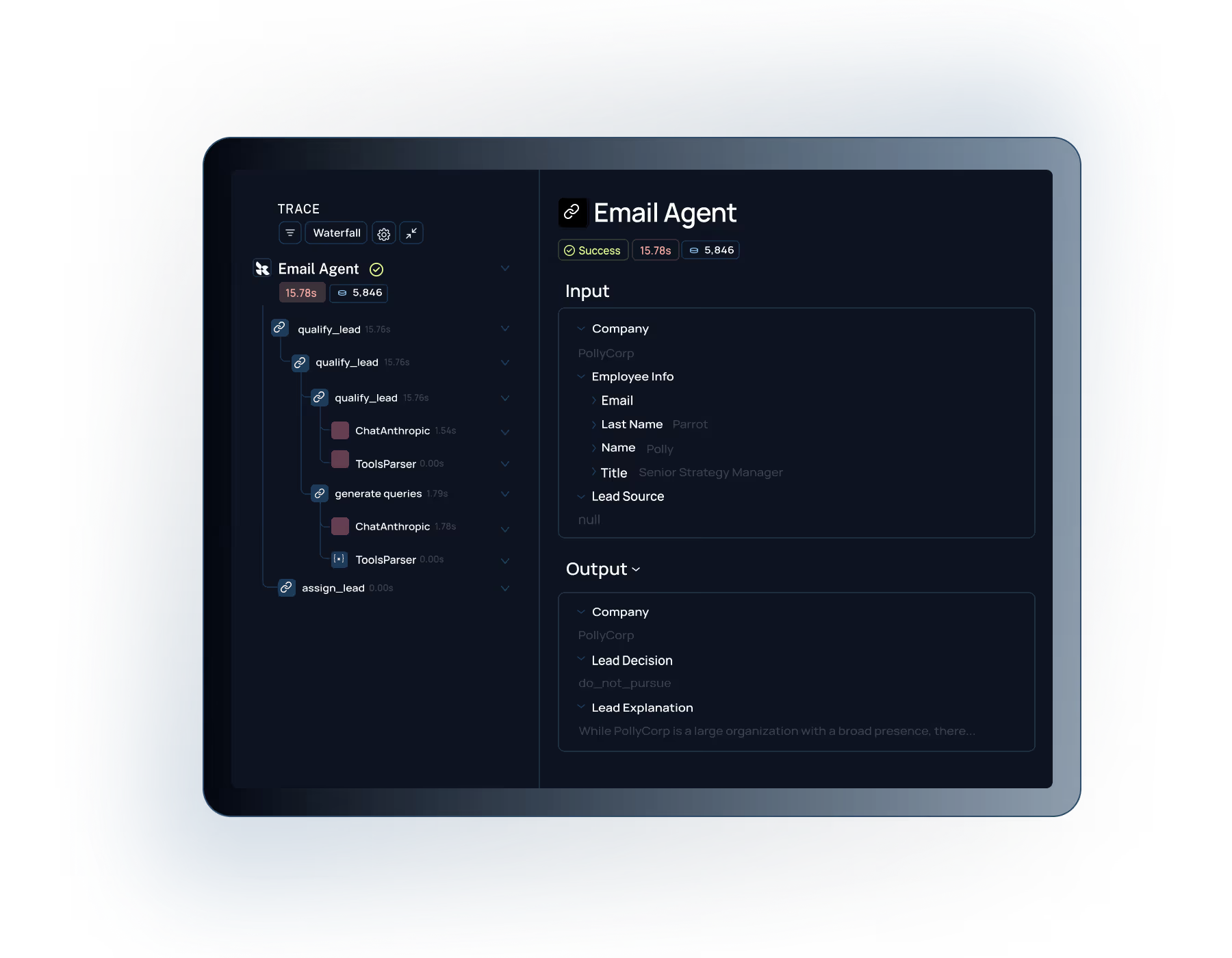

Every model output creates detailed traces that reveal exactly what your model is doing at each step. Trace execution paths, inputs, and outputs to identify issues and opportunities for improvement.

Connect with our team to see how

Built for Enterprise

LangSmith meets the demanding security, performance, and collaboration requirements of large organizations building AI applications at scale.

Role-based access control with org-level permissions and project isolation to meet your security and compliance requirements.

.svg)

Self-hosting options to maintain full control over your AI data and meet strict compliance requirements.

Measure what actually matters for your models. Replace gut-feel improvements with objective scoring that shows real progress on business metrics.

Detect quality drops before they impact users. Automated evaluation on every change ensures new versions don't regress on critical metrics.

LangSmith evals work with any LLM, fine-tuned model, or custom code. Framework agnostic evaluation that fits your stack.

"What we really needed was a more structured way to test new approaches, something better than just shipping and seeing what happened. LangSmith gave us a more scientific, structured way to understand what was actually working. Our engineers especially love the intuitive debugging experience—it's saved us a lot of time."

"LangSmith had a significant positive impact on the overall pace and quality of our development and shipping experience. The evaluation and testing capabilities let us confidently ship complex AI features at scale."

See how LangSmith evaluations help you measure, test, and improve your AI models with systematic scoring and quality benchmarking.