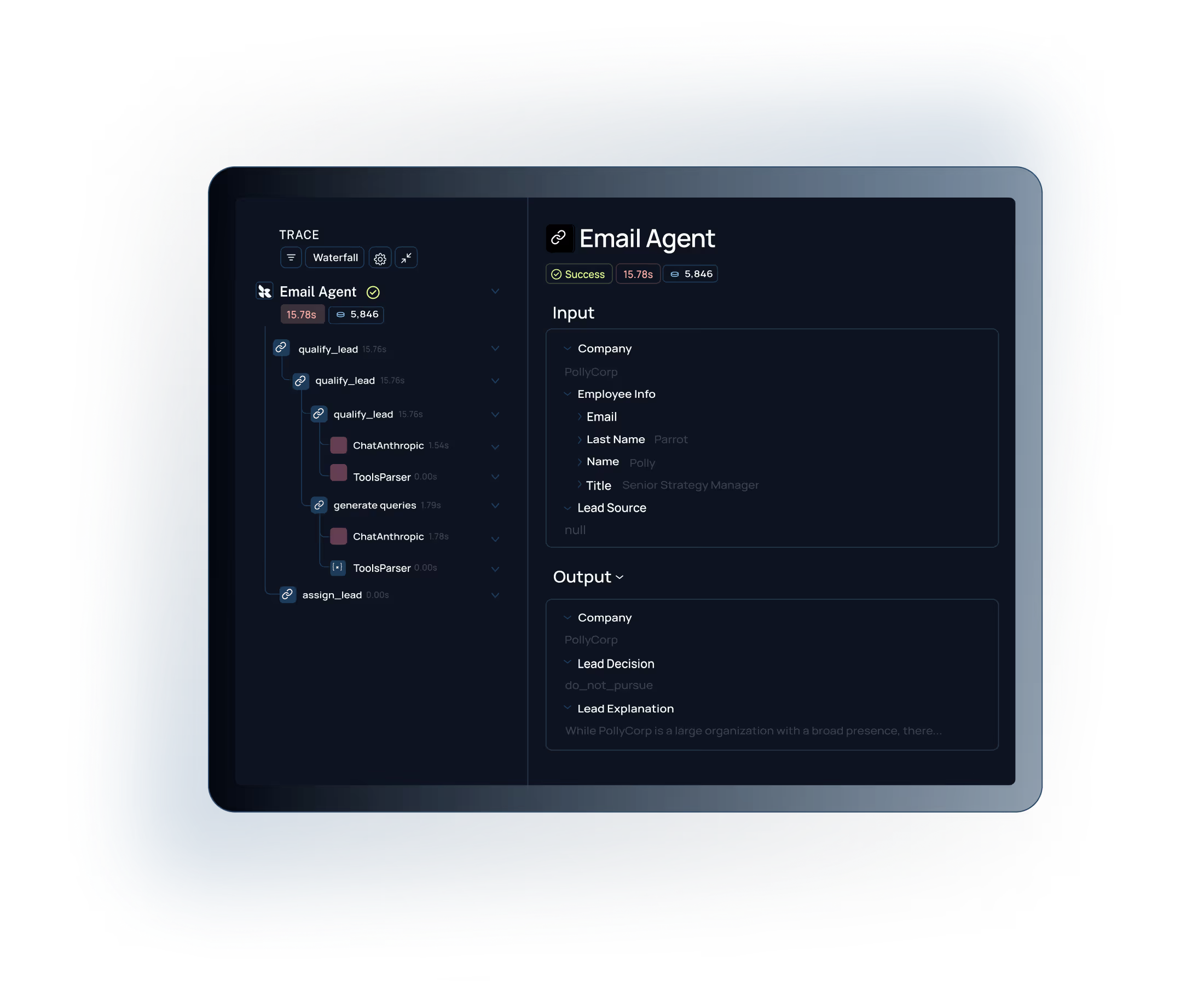

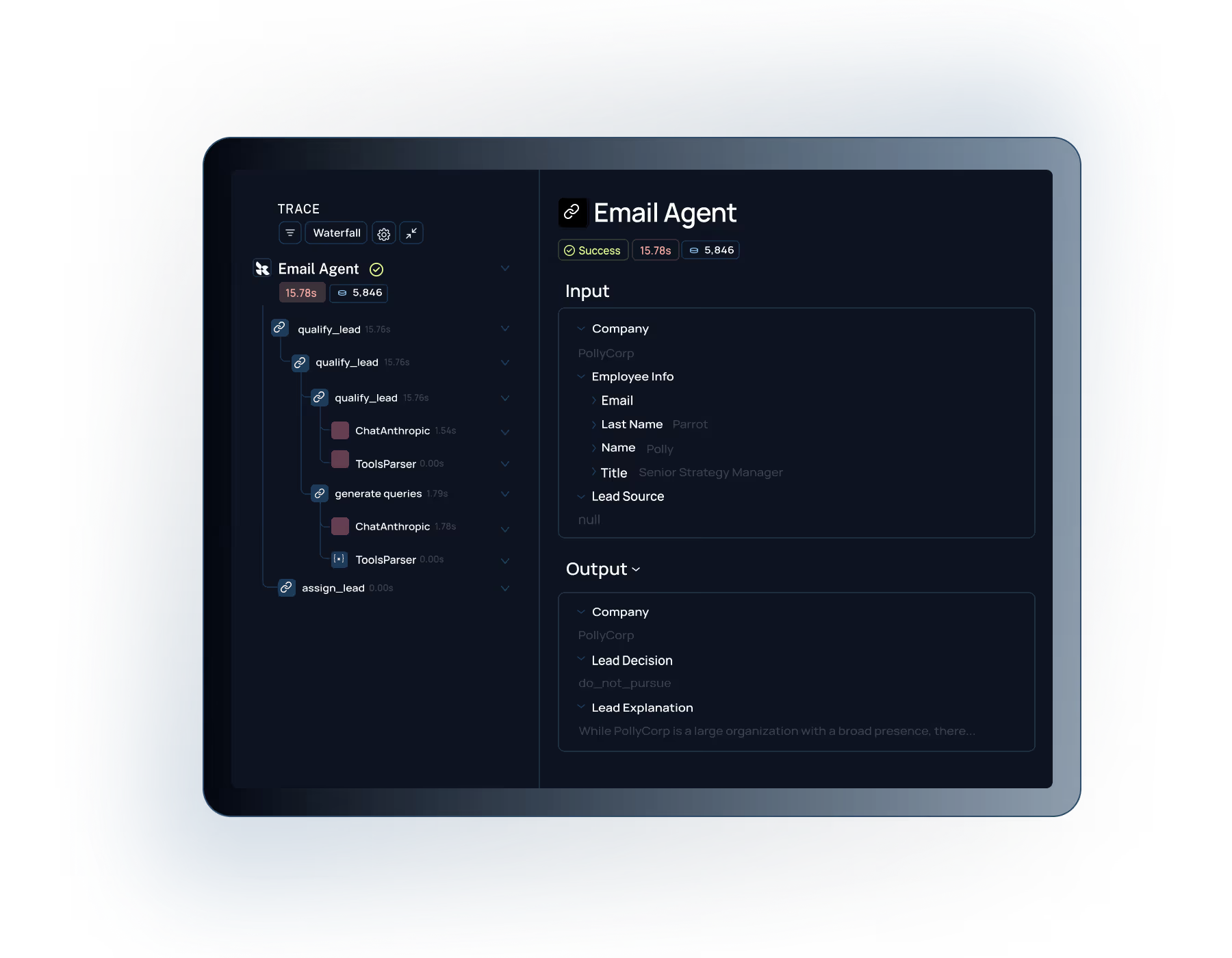

Visibility & control

See exactly what's happening at every step of your LLM application. Debug issues and understand behavior instantly.

Go beyond monitoring infrastructure.

With LangSmith, you can debug hallucinations and improve LLM performance with tracing, monitoring, alerting, and evaluation.

Try LangSmith free. No credit card required.

Add a few lines of code to trace your LLM app. Works with OpenAI, Anthropic, LangChain, or any custom stack.
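As a rough illustration of what "a few lines" can look like in Python, the sketch below decorates two functions with `traceable` from the LangSmith SDK. The functions `retrieve` and `answer`, the toy corpus, and the stubbed model call are hypothetical and exist only for this example. The `ImportError` fallback is also an assumption, added so the snippet runs without the SDK installed. Sending traces to LangSmith should additionally require the SDK's tracing settings (typically environment variables such as `LANGSMITH_TRACING` and `LANGSMITH_API_KEY`), so check the SDK documentation for your version.

```python
try:
    # Decorator provided by the LangSmith Python SDK.
    from langsmith import traceable
except ImportError:
    # Assumption for this sketch: fall back to a no-op decorator so the
    # example still runs when the SDK is not installed.
    def traceable(func=None, **_kwargs):
        if func is None:
            return lambda f: f
        return func


@traceable(run_type="retriever")
def retrieve(question: str) -> list[str]:
    # Hypothetical retrieval step; a real app would query a vector store here.
    corpus = ["LangSmith records traces.", "Traces contain nested runs."]
    return [doc for doc in corpus if "trace" in doc.lower()]


@traceable
def answer(question: str) -> str:
    # Hypothetical top-level step; the model call is stubbed out.
    docs = retrieve(question)
    return f"Answer based on {len(docs)} documents"


if __name__ == "__main__":
    print(answer("What is a trace?"))
```

With tracing enabled, each decorated call would be expected to appear as a run, with `retrieve` nested under `answer`. The application logic itself does not change.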

Pinpoint prompt issues, retrieval failures, and model errors at the trace level. No more log diving.

Score production traffic with online evals. Turn every failure into a test case for continuous improvement.
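The idea of scoring live traffic and turning failures into test cases can be sketched in plain Python. This is a conceptual illustration only, not the LangSmith API: `Trace`, `Dataset`, `non_empty_answer`, and `score_and_collect` are hypothetical names, and the evaluator is a deliberately trivial check.

```python
from dataclasses import dataclass, field


@dataclass
class Trace:
    """Hypothetical record of one production request."""
    inputs: str
    output: str


@dataclass
class Dataset:
    """Hypothetical store of inputs to replay as regression tests."""
    examples: list = field(default_factory=list)


def non_empty_answer(trace: Trace) -> float:
    # Toy evaluator: 1.0 if the app produced any non-blank output, else 0.0.
    return 1.0 if trace.output.strip() else 0.0


def score_and_collect(traces, evaluator, dataset, threshold=0.5):
    # Score every trace; inputs of traces scoring below the threshold
    # are appended to the dataset as future test cases.
    scores = []
    for trace in traces:
        score = evaluator(trace)
        scores.append(score)
        if score < threshold:
            dataset.examples.append(trace.inputs)
    return scores


if __name__ == "__main__":
    regression_set = Dataset()
    traffic = [Trace("What is tracing?", "A record of each step."),
               Trace("Summarize this ticket", "   ")]
    print(score_and_collect(traffic, non_empty_answer, regression_set))
    print(regression_set.examples)
```

In practice the evaluator would be something richer, such as an LLM-as-judge or a task-specific heuristic. The loop keeps the same shape: score, threshold, and save the failing inputs for the next test run.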

Teams trust LangSmith to run their most important applications

Get complete visibility to drive agent performance and improvement

Agents create dense outputs that make debugging hard. Tracing gives you clear visibility into each step, so you can confidently explain what your agent is actually doing.

Connect with our team to see how

Built for Enterprise

LangSmith meets the demanding security, performance, and collaboration requirements of large organizations building AI applications at scale.

Role-based access control with org-level permissions and project isolation to meet your security and compliance requirements.

Self-hosting options to maintain full control over your AI data and meet strict compliance requirements.

Move rapidly through the build, test, deploy, learn, and repeat cycle with workflows that span the entire LLM engineering lifecycle.

Keep your current stack. LangSmith works with your preferred open-source framework or custom code.

"Working with LangSmith on the Elastic AI Assistant had a significant positive impact on the overall pace and quality of our development and shipping experience. We couldn't have delivered the product experience our customers now have without LangSmith—and we couldn't have done it at the same pace without it."

"What we really needed was a more structured way to test new approaches, something better than just shipping and seeing what happened. LangSmith gave us a more scientific, structured way to understand what was actually working, whether that meant running pairwise evaluations or digging into why accuracy jumped from 70% to 80%. Our engineers especially love the intuitive debugging experience, it's saved us a lot of time."

See how LangSmith can help you monitor and debug your LLM applications with complete observability.