Measurable quality gates

Define and enforce quality metrics before shipping. Catch regressions automatically and ship with confidence.

Test LLM outputs systematically with metrics and evaluation frameworks.

Catch quality issues before production with automated evals on datasets and production traffic.

Try LangSmith free. No credit card required.

Set up evaluation criteria and metrics that matter for your use case. Choose from custom evaluators, LLM-as-judge, or human feedback.
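As an illustration of what a custom evaluator can look like, here is a minimal sketch in plain Python. It does not use the LangSmith SDK. The `EvalResult` class, the `contains_required_terms` function, and the sample strings are all hypothetical names chosen for this example. The idea is simply that an evaluator takes an output and returns a named score that can be tracked over time.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    """Hypothetical container for one evaluator's verdict on one output."""
    key: str        # name of the metric
    score: float    # 0.0 (worst) to 1.0 (best)
    comment: str = ""


def contains_required_terms(output: str, required_terms: list[str]) -> EvalResult:
    """Score an output by the fraction of required terms it mentions.

    Matching is a case-insensitive substring check, which is an assumption
    made for this sketch; a real criterion may need something stricter.
    """
    if not required_terms:
        return EvalResult("required_terms", 1.0, "no terms required")
    text = output.lower()
    missing = [term for term in required_terms if term.lower() not in text]
    score = 1.0 - len(missing) / len(required_terms)
    comment = "missing: " + ", ".join(missing) if missing else "all terms present"
    return EvalResult("required_terms", score, comment)


result = contains_required_terms(
    "Refunds are processed within 5 business days.",
    ["refund", "business days"],
)
print(result)
```

A rule-based check like this is cheap and deterministic. Criteria that are hard to express as rules, such as tone or helpfulness, are where LLM-as-judge or human feedback would typically come in.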

Run evaluations on test datasets and production traffic. Compare versions and measure the impact of prompt changes and model updates.
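To make the idea of comparing versions concrete, the following sketch runs two versions of an application over the same small dataset and reports the change in mean score. It is plain Python rather than the LangSmith SDK. The dataset, the `exact_match` metric, and the stand-in functions `app_v1` and `app_v2` are assumptions invented for illustration; in practice each would call a real prompt or model.

```python
from statistics import mean
from typing import Callable

Dataset = list[dict[str, str]]


def exact_match(output: str, expected: str) -> float:
    """Return 1.0 when output equals expected, ignoring case and outer whitespace."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_eval(app: Callable[[str], str], dataset: Dataset) -> float:
    """Run the app on every example and return the mean exact-match score."""
    return mean([exact_match(app(ex["input"]), ex["expected"]) for ex in dataset])


# Hypothetical test dataset of input / expected-output pairs.
dataset: Dataset = [
    {"input": "2 + 2", "expected": "4"},
    {"input": "capital of France", "expected": "Paris"},
    {"input": "opposite of hot", "expected": "cold"},
    {"input": "3 * 3", "expected": "9"},
]


def app_v1(question: str) -> str:
    """Stand-in for the current version (answers two of the four questions)."""
    answers = {"2 + 2": "4", "capital of France": "paris"}
    return answers.get(question, "unknown")


def app_v2(question: str) -> str:
    """Stand-in for the candidate version (answers three of the four questions)."""
    answers = {"2 + 2": "4", "capital of France": "Paris", "opposite of hot": "cold"}
    return answers.get(question, "unknown")


baseline = run_eval(app_v1, dataset)
candidate = run_eval(app_v2, dataset)
delta = candidate - baseline
print(f"baseline={baseline:.2f} candidate={candidate:.2f} delta={delta:+.2f}")
```

Holding the dataset and the metric fixed is what lets the difference be attributed to the prompt or model change rather than to a change in the test itself.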

Only deploy LLMs that pass validation gates. Use production traces to refine evaluation datasets and improve continuously.
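A validation gate can be as simple as a set of minimum scores that a candidate must meet before it is released. The sketch below is a plain Python illustration, not LangSmith's implementation. The metric names, threshold values, and the `check_gates` function are hypothetical.

```python
def check_gates(scores: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Return one human-readable failure per metric that misses its minimum.

    A metric with no reported score is treated as a failure, so a gate
    cannot pass just because an evaluator did not run.
    """
    failures = []
    for metric, minimum in thresholds.items():
        score = scores.get(metric)
        if score is None:
            failures.append(f"{metric}: no score reported")
        elif score < minimum:
            failures.append(f"{metric}: {score:.2f} < {minimum:.2f}")
    return failures


# Hypothetical minimums and the scores from a candidate's evaluation run.
thresholds = {"correctness": 0.80, "groundedness": 0.90}
scores = {"correctness": 0.84, "groundedness": 0.87}

failures = check_gates(scores, thresholds)
deploy_allowed = not failures

for failure in failures:
    print("gate failed:", failure)
print("deploy allowed:", deploy_allowed)
```

In a CI pipeline, a script like this would usually exit with a non-zero status when `failures` is non-empty so that the deployment step is skipped.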


Teams trust LangSmith to validate and test their critical LLM applications

Validate output quality with systematic testing and measurable metrics

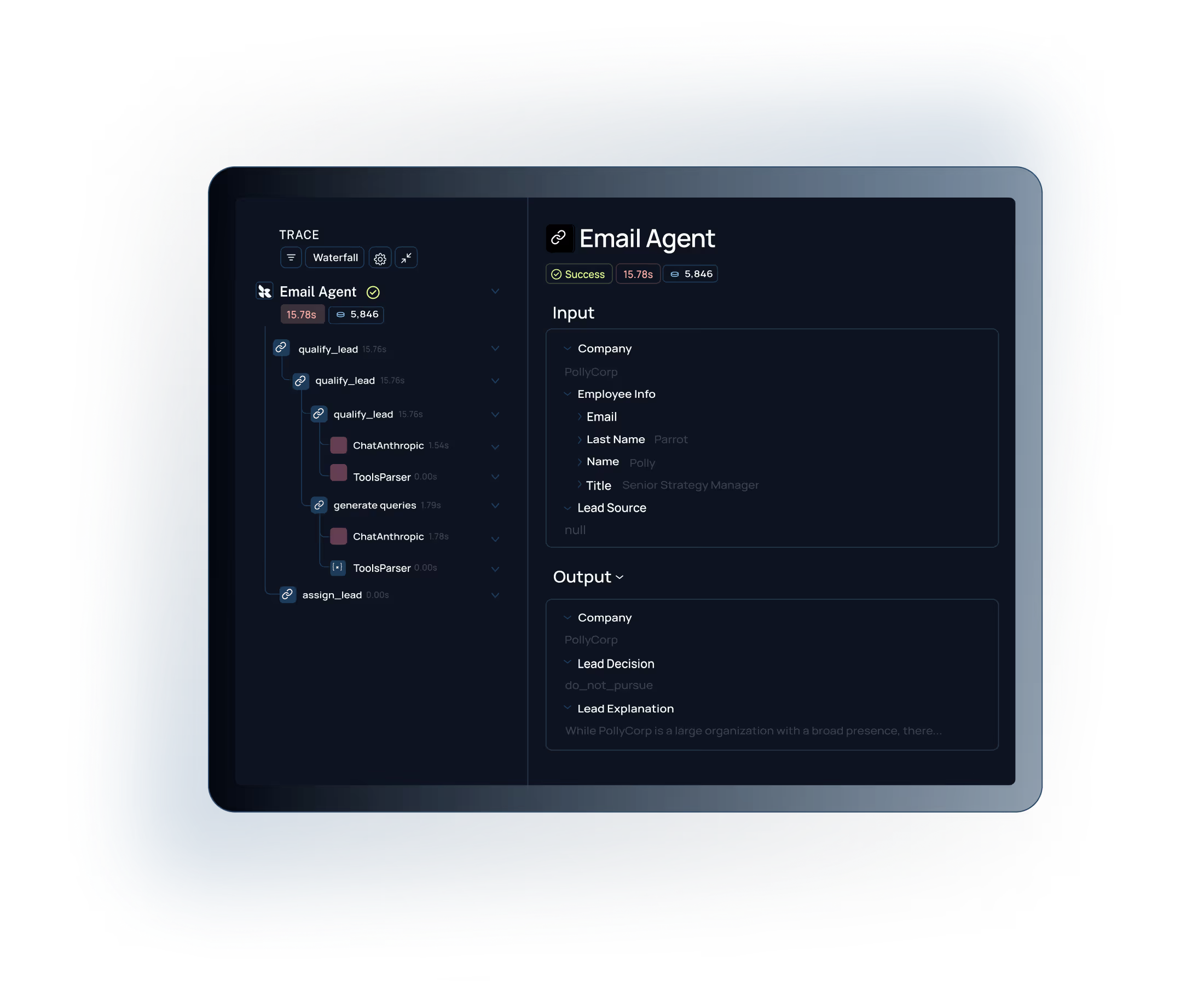

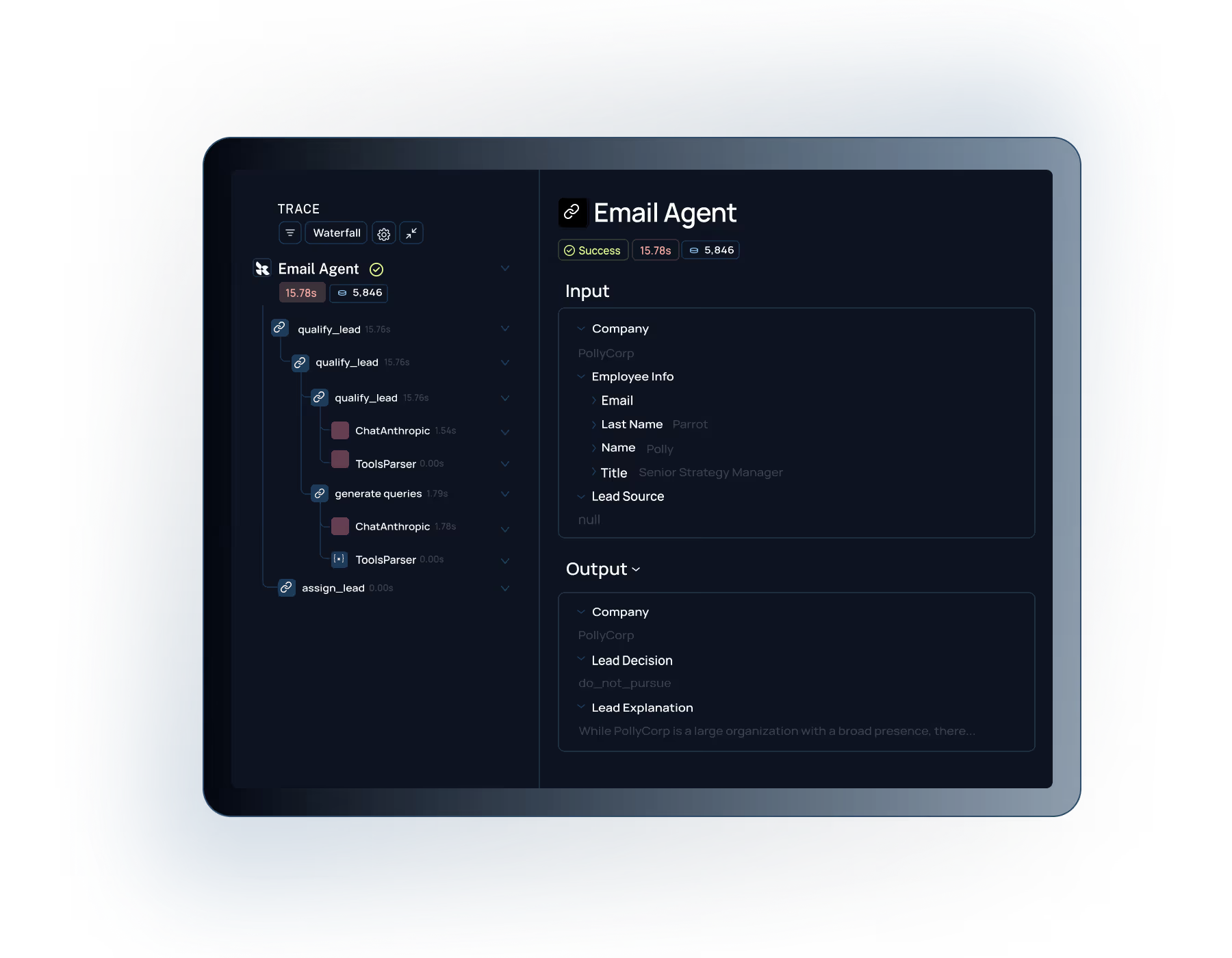

Get clear visibility into LLM outputs and behavior. Trace every input, prompt, and response to understand quality issues and identify failure patterns.

Connect with our team to see how

Built for Enterprise

LangSmith meets the demanding security, performance, and collaboration requirements of large organizations building AI applications at scale.

Role-based access control with org-level permissions and project isolation to meet your security and compliance requirements.


Self-hosting options to maintain full control over your AI data and meet strict compliance requirements.

Run automated evals on curated datasets and production traffic. Compare prompt versions and measure the impact of each change.

Works with any LLM, framework, or custom code. Validate outputs regardless of your architecture.

"Working with LangSmith on the Elastic AI Assistant had a significant positive impact on the overall pace and quality of our development and shipping experience. We couldn't have delivered the product experience our customers now have without LangSmith—and we couldn't have done it at the same pace without it."

"What we really needed was a more structured way to test new approaches, something better than just shipping and seeing what happened. LangSmith gave us a more scientific, structured way to understand what was actually working, whether that meant running pairwise evaluations or digging into why accuracy jumped from 70% to 80%. Our engineers especially love the intuitive debugging experience, it's saved us a lot of time."

See how LangSmith can help you validate LLM quality with systematic testing and evaluation frameworks.