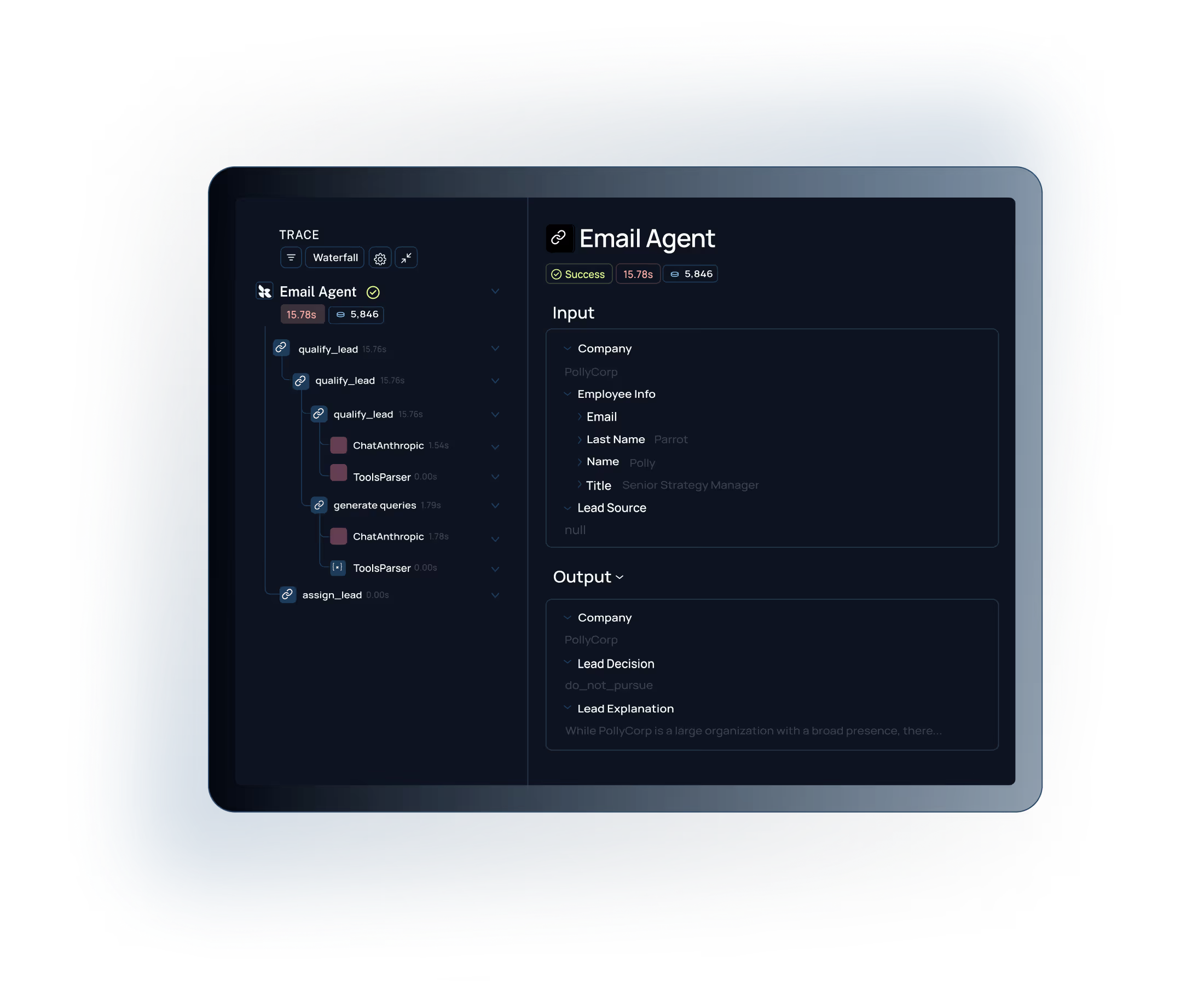

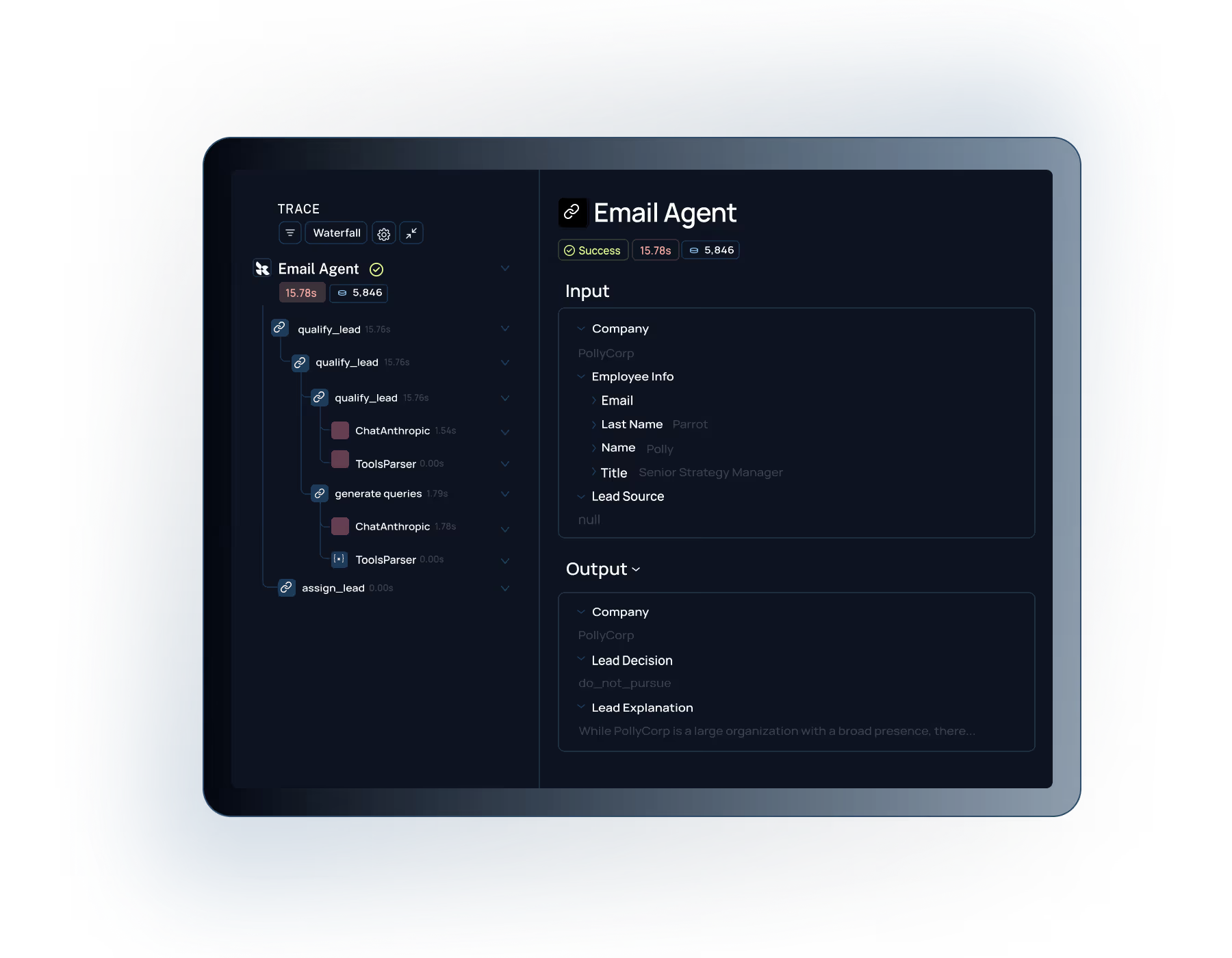

Debug context-folding failures

Pinpoint exactly where a long horizon agent went off track—which step, which tool call, which context-folding decision caused the failure—without replaying the entire run from scratch.

Long horizon agents fail in silence—losing context mid-task, making poor decisions across hundreds of steps, and drifting from their goals.

LangSmith gives you full visibility into every step, tools to debug context-folding and retrieval failures, and evals to validate reinforcement learning reward signals across the full task horizon.

Try LangSmith free. No credit card required.

Capture every step, tool call, and context-folding operation across the entire long horizon agent lifecycle. See exactly how context accumulates, compresses, and influences decisions over hundreds of steps.

Run evals that score long horizon agent behavior across the complete task—not just individual steps. Test reinforcement learning reward signals for interactive LLM agents and catch goal drift before it reaches users.

Ship long horizon agents with stateful session management and context-folding support. Monitor context growth, flag anomalous behavior, and keep extended agent runs reliable across your entire user base.

.svg)

.svg)

.svg)

.svg)

.svg)

Teams trust LangSmith to develop, evaluate, and deploy their most complex long horizon agent applications—from context-folding pipelines to reinforcement learning workflows

Debug, evaluate, and scale long horizon agents that reason and act across hundreds of steps

Trace every decision, tool call, and context-folding operation across long multi-step runs. Understand exactly where your long horizon agent lost the thread, made a wrong turn, or ran out of useful context—no more guessing from final outputs alone.

Connect with our team to see how

Built for Enterprise

LangSmith meets the demanding security, performance, and collaboration requirements of large organizations building AI applications at scale.

Role-based access control with org-level permissions and project isolation to meet your security and compliance requirements.

.svg)

Self-hosting options to maintain full control over your AI data and meet strict compliance requirements.

Pinpoint exactly where a long horizon agent went off track—which step, which tool call, which context-folding decision caused the failure—without replaying the entire run from scratch.

Test long horizon interactive LLM agents end-to-end with evals that score behavior across the complete task. Validate RL reward signals, measure goal completion rates, and catch drift before shipping.

Deploy long horizon agents designed to run for hours or days. LangSmith handles session management, context scaling via folding, and production monitoring so extended workloads stay reliable at any scale.

"Working with LangSmith on the Elastic AI Assistant had a significant positive impact on the overall pace and quality of our development and shipping experience. We couldn't have delivered the product experience our customers now have without LangSmith—and we couldn't have done it at the same pace without it."

"What we really needed was a more structured way to test new approaches, something better than just shipping and seeing what happened. LangSmith gave us a more scientific, structured way to understand what was actually working, whether that meant running pairwise evaluations or digging into why accuracy jumped from 70% to 80%. Our engineers especially love the intuitive debugging experience, it's saved us a lot of time."

See how to build, debug, and scale long horizon agents—with full observability into context-folding, reinforcement learning workflows, and every step across the full task horizon.